
Introduction to GEMDM

GEMDM is a Distributed Memory version of GEM

The Distributed Memory (DM) implementation of the GEM model is one

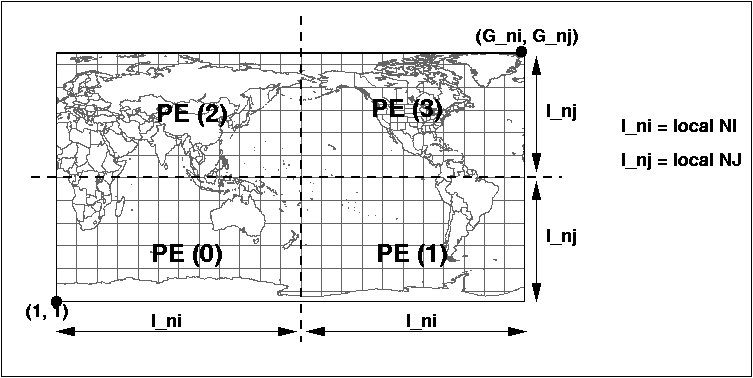

whereby a global domain of dimension G_ni x G_nj is split into

subdomains of dimension L_ni x L_nj using a regular block partitioning

technique. This partitioning is itself based on a user choice of

'Ptopo_npex' number of processors to split G_ni and 'Ptopo_npey'

number of processors to split G_nj. This creates an array of subdomains

to which we match an array of processors known as a 'processor

topology' of (Ptopo_npex x Ptopo_npex). Each processor will compute

only on its own local subdomain of dimension L_ni x L_nj.
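The regular block partitioning described above can be sketched as follows. This is a minimal illustration, not the GEMDM source: the function names `local_extent` and `local_dims` are hypothetical, and the rule used when the number of gridpoints is not divisible by the number of processors (one extra point to each of the lowest-indexed PEs) is an assumption, since the text does not state how GEMDM handles the remainder.

```python
def local_extent(g_n, npe, ipe):
    """Return (start, count) of the points owned by PE index `ipe` (0-based)
    along one axis of `g_n` global points split among `npe` processors.

    Assumption (not from the GEMDM documentation): leftover points are
    handed out one each to the lowest-indexed PEs.
    """
    if not 0 <= ipe < npe:
        raise ValueError("ipe must satisfy 0 <= ipe < npe")
    base, extra = divmod(g_n, npe)
    count = base + (1 if ipe < extra else 0)
    # 0-based global index of the first point owned by this PE
    start = ipe * base + min(ipe, extra)
    return start, count


def local_dims(g_ni, g_nj, npex, npey, ix, iy):
    """Local (l_ni, l_nj) for the PE at topology position (ix, iy)."""
    _, l_ni = local_extent(g_ni, npex, ix)
    _, l_nj = local_extent(g_nj, npey, iy)
    return l_ni, l_nj


# Hypothetical 101 x 50 global grid on a (2x1) processor topology.
for ix in range(2):
    print("PE", ix, "-> (l_ni, l_nj) =", local_dims(101, 50, 2, 1, ix, 0))
```

With Ptopo_npey=1 the second axis is not split, so every PE in this sketch gets an l_nj equal to G_nj, while l_ni differs between PEs when G_ni is odd.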

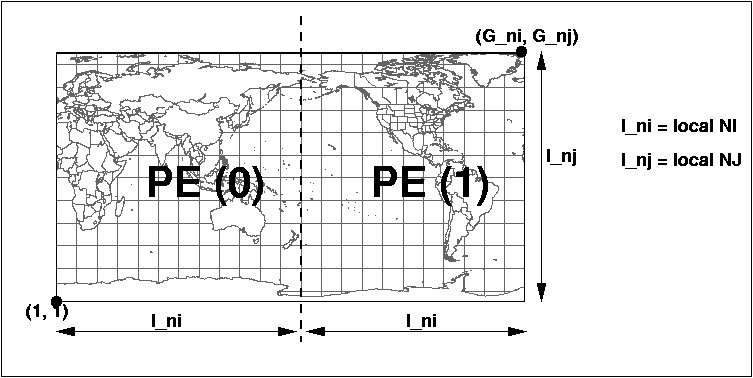

A processor topology of (2x1) ie: Ptopo_npex=2 and Ptopo_npey=1 would look like this:

Note that each PE sees its own values of l_ni and l_nj, which may differ from one PE to another. The l_ni and l_nj values are determined at run-time based on the processor topology and the number of gridpoints of the global domain (G_ni,G_nj). In the example above, l_nj is equal to G_nj for both PE 0 and PE 1.

This DM implementation of GEMDM uses the Message Passing Interface (MPI) library. In this context, n = (Ptopo_npex x Ptopo_npey) exact copies of the main program are launched at once at startup. These are known as the MPI processes, to each of which a PE will typically be assigned. Through a series of initial communications, each PE obtains its rank and its position within the processor topology. Data decomposition can then take place, and the computation starts immediately afterwards.
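As an illustration of how a rank can be related to a position within the processor topology, here is a minimal sketch. It does not call MPI, and the ordering it uses (ranks numbered along x first, then along y) is an assumption made for the example; the text does not say which ordering GEMDM uses. The names `topo_position` and `topo_rank` are hypothetical.

```python
def topo_position(rank, npex, npey):
    """Map a 0-based rank to a 0-based (ix, iy) position in an
    npex x npey processor topology.

    Assumption: ranks increase along x first, then along y.
    """
    if not 0 <= rank < npex * npey:
        raise ValueError("rank is outside the processor topology")
    iy, ix = divmod(rank, npex)
    return ix, iy


def topo_rank(ix, iy, npex):
    """Inverse mapping: (ix, iy) position back to the rank."""
    return iy * npex + ix


# List every PE of a hypothetical (2x3) topology.
for rank in range(2 * 3):
    print("rank", rank, "-> position", topo_position(rank, 2, 3))
```

In a real MPI program the rank itself would be obtained from the MPI library (for example through MPI_Comm_rank) rather than from a loop as done here.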

Note that arrays with halos are formally shaped the following way:

NAMING and CODING conventions in GEMDM

The routines, functions and comdecks are named so as to group related operations together. Please see the naming and coding conventions for routines and variables specified in this document:

revisions_doc/code_stds_gemdm.html

GEMDM ENVIRONMENT

The very first thing one must do to work in the GEMDM environment is to set/add the PATH to the proper version. This is done by issuing the command:

For example:

(2) the absolute (executable) files: maingemntr[ARCH]_3.1.1.Abs, maingemdm[ARCH]_3.1.1.Abs

These last two items take a lot of space and do not need backup. It is strongly suggested to create soft links for the directories and executables pointing to another disk system to avoid exceeding the quota.

(3) directories for interactive runs only: output, process,

For creating absolutes:

(on POLLUX, you would obtain maingemntrIRIX64_3.1.1.Abs, maingemdmIRIX64_3.1.1.Abs)

(on AZUR, you would obtain maingemntrAIX_3.1.1.Abs)

(on Linux, you would obtain maingemdmLinux_3.1.1.Abs)

For creating absolutes with modifications to the code:

For example:

    make rhs.o nli.o

(This places 'rhs.o' and 'nli.o' into malibIRIX64 if compiling on POLLUX.)

If you have modified a routine in a way that changes its dependencies (i.e. added or deleted comdecks), then you must redo the Makefile before re-compiling as follows:

    make [routine1].o [routine2].o

If you have modified the comdecks themselves, then you must redo the Makefile, remove the old '*.o' files (for coherency) and compile all the routines affected by the modified comdecks:

    r.make_exp            (rebuild Makefile)
    rm malib[ARCH]/*.o    (removes all the old '*.o')
    make objloc           (creates all new '*.o')

Example for Linux:

    r.make_exp
    cd malibLinux
    \rm *.o
    cd ..
    make objloc

Then, after the compilations, rebuild the absolute as shown above:

For further details on compiling and building absolutes, see the documentation on r.compile and r.build on the RPN website:

http://iweb.cmc.ec.gc.ca/rpn/mrb/si/eng/si/utilities

HOW TO RUN GEMDM

You can obtain a copy of the sample debug configuration files gem_settings.nml, outcfg.out and configexp.dot.cfg by running the script "gem_config dummy". This will produce a sub-directory "dbg1_configs" containing these 3 files.

(2) outcfg.out controls the output of the model

(3) configexp.dot.cfg controls where the model will be launched, the chosen initial analysis, climatology files, max memory, cpu time, etc.

One can find documentation on the configuration files gem_settings.nml and outcfg.out in a file called gem_settings.doc stored in the RCS. It can be extracted by typing:

One can find all the scripts related to a particular version

of GEMDM in $gem/scripts

The above will use default files. Here is an example using specific analysis or climatology files:

The output of a run (Um_runmod.sh) will be found in the sub-directory "output" in the form of binary slab files. To convert them to RPN standard files, execute the following scripts from the directory above "output":

Here is an example where the slab files are saved in a directory other than "output", such as "output2", and the run uses Ptopo_npex=1, Ptopo_npey=2:

A file is produced by each PE if its local domain contains part of the output grid. For visualization, these slab files must be post-processed with a program called "delamineur2000" (inside the script delam) to convert them to RPN Std '#' grid files (see http://iweb.cmc.ec.gc.ca/rpn/mrb/si/eng/si/misc/grilles.html#diese).

If the run was done with more than 1 PE, each '#' RPN file displays only what each PE 'sees' as its local domain. To visualize the 'complete' grid, one must reassemble the '#' grid files into one 'Z' grid file by using the program bemol2000 (inside the script d2z) (see http://iweb.cmc.ec.gc.ca/rpn/mrb/si/eng/si/utilities/bemol/index.html).
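The idea of reassembling per-PE local domains into one complete grid can be sketched conceptually as below. This is not how bemol2000 or d2z are implemented and it does not read RPN standard files; it only stitches plain Python lists together. The function name `assemble_global` and the tile layout (a 0-based global offset plus a block of rows) are assumptions made for the illustration.

```python
def assemble_global(tiles, g_ni, g_nj):
    """Stitch local tiles into one g_nj x g_ni grid (list of rows).

    Each tile is (i0, j0, data): data[j][i] is a local 2-D block whose
    first point sits at 0-based global index (i0, j0).
    """
    grid = [[None] * g_ni for _ in range(g_nj)]
    for i0, j0, data in tiles:
        for j, row in enumerate(data):
            for i, value in enumerate(row):
                grid[j0 + j][i0 + i] = value
    # Refuse to return a grid with holes left by missing tiles.
    if any(value is None for row in grid for value in row):
        raise ValueError("tiles do not cover the global grid")
    return grid


# Two tiles from a hypothetical Ptopo_npex=1, Ptopo_npey=2 run
# on a global grid with G_ni=3 and G_nj=4.
south = (0, 0, [[1, 2, 3], [4, 5, 6]])
north = (0, 2, [[7, 8, 9], [10, 11, 12]])
full = assemble_global([south, north], g_ni=3, g_nj=4)
for row in full:
    print(row)
```

The check for uncovered points reflects the situation described above: a single tile shows only one PE's local domain, so all tiles are needed to obtain the complete grid.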

RUNNING BATCH MODE

The launching scripts will require ${HOME}/gem to already exist. The purpose of this is to force the user to provide space for the execution of the model through a proper link of directory ${HOME}/gem. For example:

Example:

    ln -s /fs/mrb/02/armn/armnviv azur                  (for azur)
    ln -s /data/dormrb03/armn/armnviv/pollux pollux     (for pollux)
    ln -s /data/local2/armn/armnviv/lorentz lorentz     (for lorentz)

Example:

    cd listings
    ln -s /fs/mrb/02/armn/armnviv/listings azur                (for azur)
    ln -s /data/local2/armn/armnviv/lorentz/listings lorentz   (for lorentz)

Some hints on its controls:

    model=[gem/mc2];
    version=3.1.1;
    t=[number of seconds for wall clock];
    d2z=1;                 to automatically re-assemble output
    mach=[machine];        (azur,pollux,lorentz)
    listing=/users/dor/armn/viv/listings;

Then, to launch the model in batch mode on any platform or backend, execute the script

where [exp] is the name of a subdirectory (like dbg1_configs) containing the files gem_settings.nml, outcfg.out and configexp.dot.cfg.

KEEPING INFORMED ON CHANGES!!

To be kept informed of current developments and problem-solving related to GEMDM, one should subscribe to the "gem" mailing list by sending an e-mail to "Majordomo@cmc.ec.gc.ca" with the line:

GEM in LAM CONFIGURATION

For those who want to try GEM in a Limited Area Modelling (LAM) configuration, here is an example of a LAM grid definition. Complete details of the namelist variables are described in gem_settings.doc

For more information on LAM, refer to the LAM seminar (authors: V. Lee, M. Desgagné, March 2003).
